Part 5 Scaling the System at AR - Part 5 - Message Queue for Scaling team

If you have read some of my previous blog post, you may know that we have been stuck with Rethinkdb for years. Rethinkdb was good database. However, the development was stopped some years ago and there is no sign that it will be continued in the future. We have been following some very active guys in the community and even thought about donating for them. However, all of them have lost their interest in Rethinkdb and decided to move forward with other alternative solutions. Also, as I have already mentioned before in Mistakes of a Software Engineer - Favor NoSQL over SQL, most of our use cases are not suitable with the design of Rethinkdb anymore and all the optimizations that we made are reaching their limit.

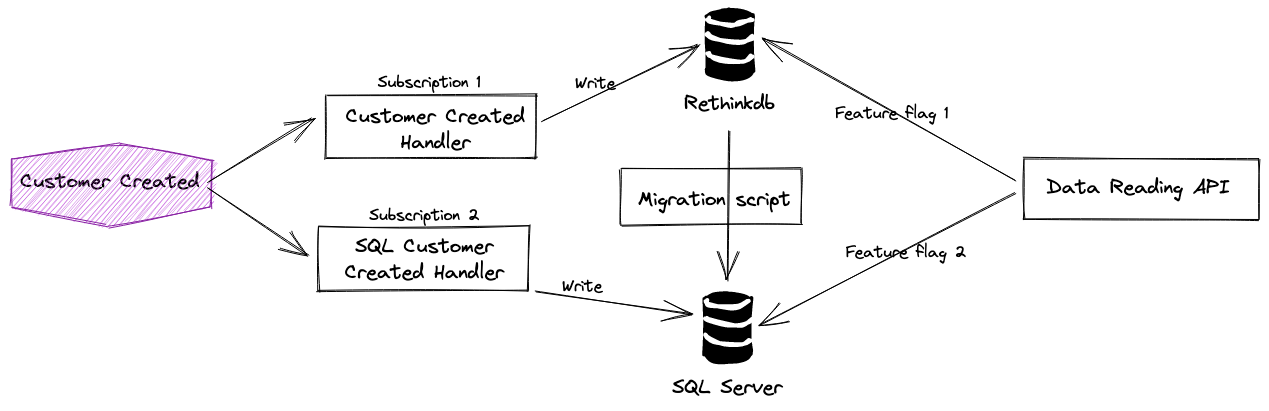

After several discussions and analysis, we decided to move away from Rethinkdb to MS SQL Server. Some requirements that we have to satisfy are

- The user should be able to view and edit the data normally, without any downtime.

- There should be a backup plan for it.

- There should be an experimental period, where we can pick some users, turn on the new database and analyze the correctness of the data.

This can be achieved easily using the Pub/Sub model and Message Queue design described in Scaling the System at AR - Part 5 - Message Queue for Scaling team.